Overview

Our goal is to develop a drone capable of autonomous flight that responds to gesture-based commands from a ground-based pilot. The drone interprets and executes these commands, giving the pilot dynamic, user-friendly control for a range of applications.

To carry the commands, we implement wireless communication that transmits each gesture-based command from a Ground Control Station (GCS) to the drone.

Approach

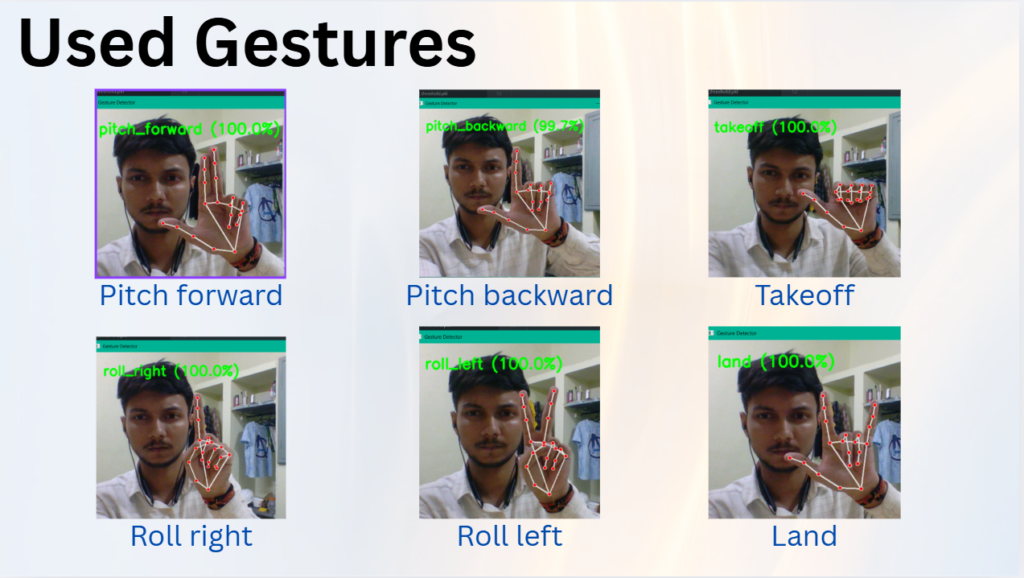

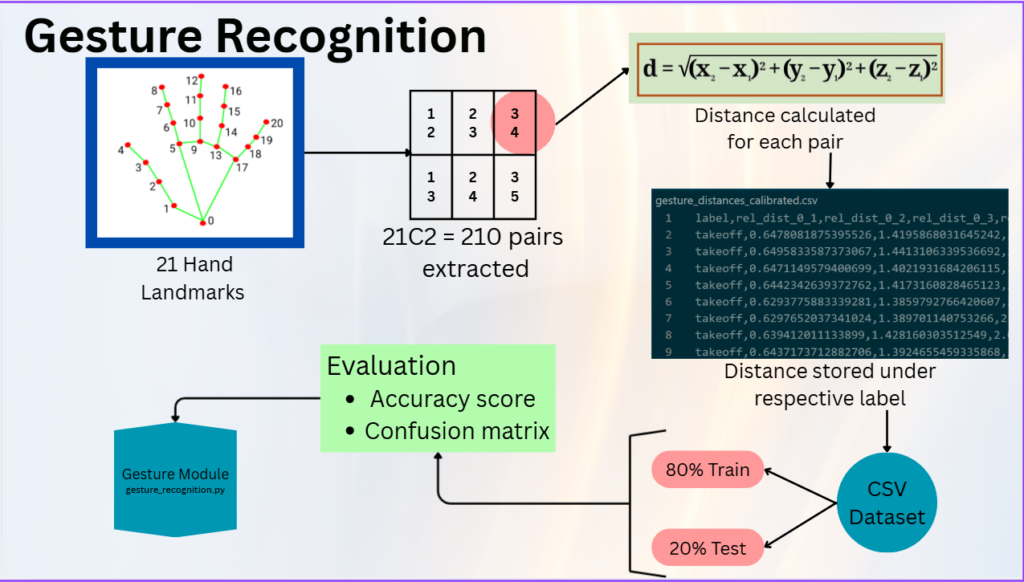

- Gesture recognition is done by training an ML model on a labelled dataset of distances between all possible pairs of hand landmarks. The Mediapipe library detects the hand landmarks and provides their x, y, z coordinates, and a RandomForestClassifier is used as the model.

- Using Mediapipe, we extract the x, y, z coordinates of each of the 21 hand landmarks. For every pair of landmarks, i.e. C(21,2) = 210 pairs, the distance between them is calculated and stored in a CSV dataset labelled with the corresponding gesture. Before being stored, the distances are divided by the width of the user’s hand (the distance between landmarks 5 and 17) so that the features are relative to hand size and the model is not trained for only one person.

- For each gesture we collect 5,000 rows of 210 distances at a collection rate of 10 rows/sec. The training script loads the CSV file and maps the gesture labels (strings like “takeoff”) to integers, after which the data is shuffled and split into 80% training and 20% testing data.

- Using StandardScaler, each feature is standardized to mean = 0 and standard deviation = 1. The RandomForestClassifier is trained with parameters including 300 decision trees and a maximum tree depth of 20. The model is evaluated using a classification report and a confusion matrix, with a probability threshold of 0.8: predictions whose probability falls below it are treated as “unknown”. Once trained and evaluated, the model is saved as a .pkl file and used for prediction.
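
The feature step and the “unknown” threshold described above can be sketched in plain Python. This is a minimal illustration, not the project's actual code: the function names `distance_features` and `label_or_unknown` are hypothetical, landmarks are assumed to arrive as 21 `(x, y, z)` tuples in Mediapipe's index order, and the probabilities are assumed to be one row of per-class probabilities such as the classifier's `predict_proba` output.

```python
from itertools import combinations
from math import dist

NUM_LANDMARKS = 21                # Mediapipe reports 21 landmarks per hand
INDEX_MCP, PINKY_MCP = 5, 17      # landmarks used as the hand-width reference


def distance_features(landmarks):
    """Return the 210 pairwise landmark distances, divided by hand width.

    `landmarks` is assumed to be a sequence of 21 (x, y, z) tuples.
    """
    if len(landmarks) != NUM_LANDMARKS:
        raise ValueError(f"expected {NUM_LANDMARKS} landmarks, got {len(landmarks)}")
    hand_width = dist(landmarks[INDEX_MCP], landmarks[PINKY_MCP])
    if hand_width == 0:
        raise ValueError("landmarks 5 and 17 coincide; cannot normalize")
    # combinations() yields each unordered pair once: C(21, 2) = 210 pairs.
    return [dist(a, b) / hand_width for a, b in combinations(landmarks, 2)]


def label_or_unknown(probabilities, class_names, threshold=0.8):
    """Return the most probable class, or "unknown" if it is below the threshold."""
    best = max(range(len(probabilities)), key=probabilities.__getitem__)
    if probabilities[best] < threshold:
        return "unknown"
    return class_names[best]
```

Because every distance is divided by the same hand width, multiplying all coordinates by a constant leaves the features unchanged, which is what lets the model generalize across hand sizes and camera distances.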

Key Modules

- Gesture Recognition Module

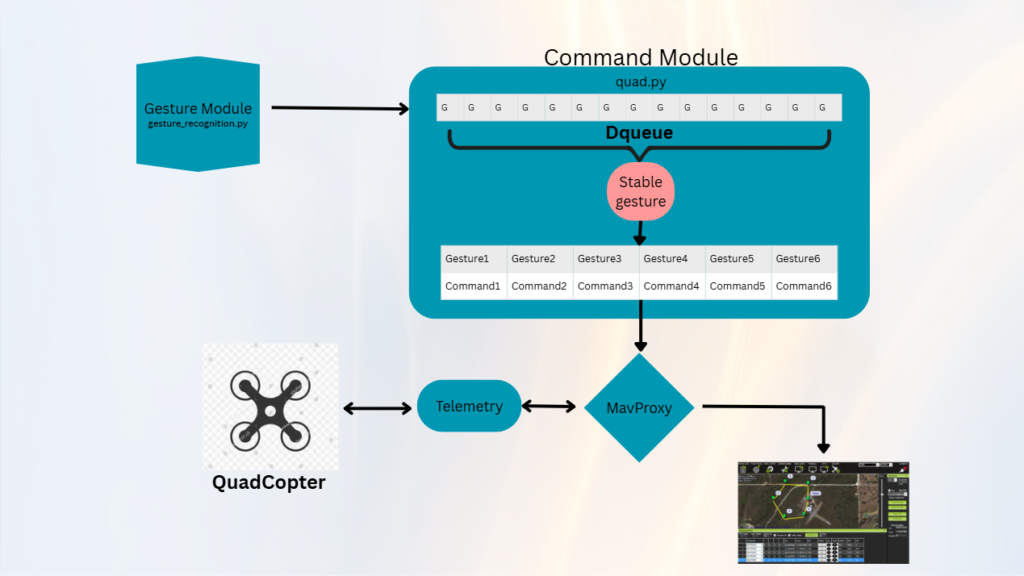

- The recognition code continuously detects gestures and sends them to the command execution code, which stores them in a deque of size 15 (first-in-first-out: once it is full, each new gesture pushes out the oldest). The most stable gesture is selected by occurrence: if a gesture makes up more than 70% of the deque (at least 11 of the 15 entries), it is treated as the stable gesture.

- The corresponding MAVProxy command for that gesture is then forwarded. This voting step smooths out fluctuations in gesture recognition that could otherwise lead to incorrect commands.
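
The deque-based voting can be sketched as follows. This is an illustrative version under stated assumptions, not the project's code: the class name `GestureVoter` is hypothetical, and returning `None` until the window has filled is an assumption about start-up behaviour that the description above does not specify.

```python
from collections import Counter, deque

WINDOW_SIZE = 15        # number of recent predictions kept
STABLE_FRACTION = 0.7   # a gesture must exceed this share of the window


class GestureVoter:
    """Keeps the most recent predictions and reports a stable gesture, if any."""

    def __init__(self, window_size=WINDOW_SIZE, stable_fraction=STABLE_FRACTION):
        # With maxlen set, appending to a full deque drops the oldest entry.
        self.window = deque(maxlen=window_size)
        self.stable_fraction = stable_fraction

    def update(self, gesture):
        """Record one prediction; return the stable gesture or None."""
        self.window.append(gesture)
        if len(self.window) < self.window.maxlen:
            return None  # assumption: wait for a full window before voting
        label, count = Counter(self.window).most_common(1)[0]
        if count / len(self.window) > self.stable_fraction:
            return label
        return None
```

With a window of 15, a gesture needs at least 11 occurrences to count as stable, so a few misclassified frames in a row do not change the command that is forwarded.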

- Drone Command Execution / Connection Module

- The final script has two parts: the drone command execution/connection code and the gesture recognition code. Before the script is run, MAVProxy is used to forward the MAVLink stream to two UDP ports: one connects to Mission Planner and the other to the Python connection code.

- The connection code connects to the vehicle, performs safety checks, and uses multithreading to run the gesture recognition code in a separate thread. This separation improves stability and helps prevent command delays.

- Two safety checks are implemented. When the stable gesture changes, the drone pauses for 1.5 seconds. Before the land command is forwarded, the “land” gesture must remain stable for at least 5 seconds.
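
One possible way to express the two timing checks is a small gate that sits between the stable gesture and the command sender. This sketch makes several assumptions that are not confirmed by the description above: the class name `CommandGate` is hypothetical, the 1.5-second pause is interpreted as a delay before a newly stable gesture is forwarded, each command is forwarded only once per stable period, and the clock is injectable so the timing can be tested without waiting.

```python
import time

CHANGE_PAUSE_S = 1.5   # pause after the stable gesture changes
LAND_HOLD_S = 5.0      # "land" must stay stable this long before it is sent


class CommandGate:
    """Decides when a stable gesture may be forwarded as a command."""

    def __init__(self, clock=time.monotonic):
        self.clock = clock      # injectable time source (assumption, for testing)
        self.current = None     # stable gesture currently being timed
        self.since = None       # time at which `current` became stable
        self.sent = False       # whether `current` was already forwarded

    def step(self, stable_gesture):
        """Call on every loop iteration; returns a gesture to forward, or None."""
        now = self.clock()
        if stable_gesture != self.current:
            # Stable gesture changed: restart the timer and hold all commands.
            self.current = stable_gesture
            self.since = now
            self.sent = False
            return None
        if stable_gesture is None or self.sent:
            return None
        required = LAND_HOLD_S if stable_gesture == "land" else CHANGE_PAUSE_S
        if now - self.since >= required:
            self.sent = True
            return stable_gesture
        return None
```

In a loop, the output of the voting step would be passed to `step()` on each iteration, and any non-`None` return value would be mapped to the corresponding drone command.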

Summary

- This system enables gesture-based control of a drone using computer vision and machine learning. Hand landmarks are detected using Mediapipe and converted into distance-based features that are normalized relative to the user’s hand width, making the model independent of individual hand size.

- A RandomForestClassifier is trained on the collected dataset and evaluated using classification metrics. During real-time operation, predictions are stabilized using a deque-based voting method before sending commands to the drone.

- The system communicates with the drone through MAVLink and MAVProxy while running the gesture recognition and connection code in separate threads. Additional safety checks, such as gesture stability thresholds and a timed confirmation for landing, improve the reliability and safety of the drone control system.

Team Members

- Aman Sharma

- Harshit Raj

- Shivansh Satyam

- Shriya Nerlikar

Mentors

- Harshal Kohle

- Mrunmayee Limaye

- Sameer Badami

- Samiron Biswas

- Sanchet Dhalwar